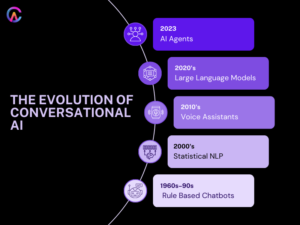

Conversational AI has come a long way, evolving from basic rule-based chatbots with scripted responses and simple pattern matching to AI agents that can make autonomous decisions, carry out multi-step reasoning, and remember past interactions. These systems can handle multimodal interactions, integrate tools, and orchestrate external APIs to execute complex tasks.

Take an early chatbot like ELIZA from 1966, which used pattern matching to simulate a psychotherapist. It had a limited vocabulary and offered rigid responses. Since then, the field has moved through statistical NLP, machine learning, and transformers to large language models (LLMs) and multimodal foundation models. These advances have paved the way for agentic architectures that support more human-like conversations, with context awareness, sensitivity to tone, and the ability to assist proactively in achieving goals.

As we look ahead to 2026, the distinction between chatbots and AI agents is becoming clearer, and the timeline that follows traces how conversational AI reached this point. The same shift is changing how people find information: AI-generated answers now appear alongside search results and featured snippets, which rewards content that demonstrates Experience, Expertise, Authoritativeness, and Trustworthiness.

From the 1960s through the 1990s, rule-based chatbots relied on keyword matching and template responses, which made conversations fragile and limited. The 2000s brought a shift to statistical NLP, with probabilistic models, intent classification, and entity extraction. The transformer architecture, introduced in 2017 and built on self-attention, allowed sequences to be processed in parallel and, as models scaled, supported much longer context windows, enabling more human-like text generation and understanding.

Generative models such as GPT-3 (2020) and multimodal models such as GPT-4 and Gemini, which combine language with modalities such as vision and audio, underpin agentic systems capable of autonomous planning, memory, tool use, and external execution. This is the current frontier of conversational AI: proactive, multi-step task completion rather than purely reactive question answering.

Early Era: Rule-Based Chatbots and Pattern-Matching Limitations (1960s-1990s)

The roots of conversational AI can be traced back to ELIZA, created in 1966 by Joseph Weizenbaum at MIT. This early program simulated a psychotherapist using pattern matching, keyword extraction, and template responses, and despite its technical limitations it paved the way for human-computer interaction. ELIZA could recognize phrases, extract keywords, and map them to predefined responses, creating the illusion of understanding through reflective questioning, much like a patient-therapist dynamic. However, it struggled with complex queries, context switches, and the emotional nuances of language because of its limited vocabulary.

PARRY followed in 1972 and aimed to simulate a paranoid personality. It used similar pattern-matching techniques to engage in conversation and could even pass some rudimentary Turing tests. However, it had a limited emotional range and often fell into repetitive patterns, so it could not maintain a natural conversational flow or learn from interactions. Then came ALICE (Artificial Linguistic Internet Computer Entity) in 1995, which used heuristic pattern matching to hold natural language conversations. It won the Loebner Prize but still lacked context memory, had a rigid personality, and struggled with extended multi-turn conversations outside its scripted domains.
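The keyword-to-template mechanism described above can be sketched in a few lines of Python. This is an illustrative approximation only, not Weizenbaum's original script: the rule table `RULES`, the pronoun map `REFLECTIONS`, and the fallback reply are all hypothetical.

```python
import re

# Hypothetical rule table: (pattern, response template).
# These rules are invented for illustration, not taken from ELIZA's script.
RULES = [
    (re.compile(r"\bi need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
]

# Pronoun swaps so the echoed fragment reads from the program's point of view.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are", "you": "I", "your": "my"}

FALLBACK = "Please go on."


def reflect(fragment: str) -> str:
    """Swap first- and second-person words in the captured fragment."""
    return " ".join(REFLECTIONS.get(word, word) for word in fragment.lower().split())


def respond(user_input: str) -> str:
    """Return the template of the first matching rule, or a generic fallback."""
    text = user_input.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(reflect(match.group(1)))
    return FALLBACK


print(respond("I need my coffee."))
print(respond("What is the capital of France?"))
```

The second call falls through to the fallback because no keyword matches, which is the brittleness the paragraph describes: anything outside the rule table gets a canned reply.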

Rule-based chatbot characteristics and fundamental limitations

- They rely on keyword pattern matching and rigid template responses, leading to brittle conversations.

- Their vocabulary is limited, and they operate on a fixed knowledge base without any learning or adaptation capabilities.

- They lack context memory, resulting in stateless conversations that reset with every interaction.

- Their domain specificity restricts them to narrow conversation scopes, often sticking to scripted scenarios.

- They create an illusion of understanding through reflective questioning, but this is merely surface level pattern recognition.

Despite these technical limitations, rule-based systems established the foundational paradigms for conversational UIs, interaction patterns, and user expectations. They showed that human-computer conversation was viable and set the stage for later advances built on statistical machine learning and transformer-based architectures.

Statistical NLP Era: Intent Classification and Entity Extraction (2000s-2010s)

Statistical natural language processing changed the game for chatbots. It introduced probabilistic models, intent classification, named entity recognition, slot filling, and the management of multi-turn conversations. Remember SmarterChild from 2001? That AOL and MSN messenger chatbot could handle weather updates, sports scores, movie times, and even basic tasks, but its context management was basic, which limited its domain coverage and personality engagement.

Siri arrived in 2011 with the Apple iPhone 4S, combining statistical NLP for intent classification with an integration with Wolfram Alpha. It could manage location-aware services, calendar appointments, and reminders, but it still struggled with natural conversation, especially in multi-turn contexts, and with emotional intelligence, different accents, and noisy environments. Then there’s Google Now from 2012, which extended Google Search with contextual cards and predictive assistance, although it remained largely tied to predefined scenarios.

Statistical NLP chatbot advancements and persistent limitations

- Intent classification and probabilistic models for dialogue state tracking in multi turn conversations

- Named entity recognition, slot filling, and parameter extraction for structured data

- Context management with limited memory and conversation history

- Domain specific integrations like Wolfram Alpha, APIs, calendars, and location services

- Reactive assistance that lacks proactivity and struggles with personality engagement and natural conversation flow
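To make intent classification and slot filling concrete, here is a minimal sketch using a hand-rolled Naive Bayes classifier with add-one smoothing and regex-based slot extraction. The training utterances, intent names (`get_weather`, `set_reminder`), and slot patterns are hypothetical; production systems of that era trained on far larger datasets and used more capable sequence models.

```python
import math
import re
from collections import Counter, defaultdict

# Hypothetical training utterances, invented for this sketch.
TRAINING = [
    ("what is the weather in paris", "get_weather"),
    ("will it rain in london tomorrow", "get_weather"),
    ("weather forecast for berlin", "get_weather"),
    ("remind me to call mom at 5pm", "set_reminder"),
    ("set a reminder for the meeting at 9am", "set_reminder"),
    ("remind me to buy milk", "set_reminder"),
]


def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())


class NaiveBayesIntentClassifier:
    """Multinomial Naive Bayes over word counts, with add-one smoothing."""

    def __init__(self, examples):
        self.word_counts = defaultdict(Counter)
        self.intent_counts = Counter()
        self.vocab = set()
        for text, intent in examples:
            tokens = tokenize(text)
            self.intent_counts[intent] += 1
            self.word_counts[intent].update(tokens)
            self.vocab.update(tokens)

    def predict(self, text):
        total = sum(self.intent_counts.values())
        scores = {}
        for intent, count in self.intent_counts.items():
            score = math.log(count / total)  # log prior
            denom = sum(self.word_counts[intent].values()) + len(self.vocab)
            for token in tokenize(text):
                score += math.log((self.word_counts[intent][token] + 1) / denom)
            scores[intent] = score
        return max(scores, key=scores.get)


def extract_slots(intent, text):
    """Fill intent-specific slots with simple regular expressions."""
    slots = {}
    lowered = text.lower()
    if intent == "get_weather":
        city = re.search(r"\bin\s+([a-z]+)", lowered)
        if city:
            slots["city"] = city.group(1)
    elif intent == "set_reminder":
        time = re.search(r"\b(\d{1,2}(?::\d{2})?\s?(?:am|pm))\b", lowered)
        if time:
            slots["time"] = time.group(1)
    return slots


classifier = NaiveBayesIntentClassifier(TRAINING)
utterance = "is it going to rain in madrid"
intent = classifier.predict(utterance)
print(intent, extract_slots(intent, utterance))
```

The classifier generalizes to wordings it has never seen, which rule tables cannot do, but the slots are still filled by narrow patterns, mirroring the domain-specific limits noted in the list above.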

Statistical NLP laid the groundwork for enterprise chatbots, powering customer service FAQ bots, e-commerce assistants, and banking virtual agents. These systems established the commercial viability of conversational interfaces, even though natural conversation remained a challenge and most deployments were confined to narrow domains and scripted flows.

Voice Assistants Era: Multimodal Conversational Interfaces (2010s-Early 2020s)

In late 2014, Amazon introduced the Echo (broadly available in 2015), which kicked off a race in the voice assistant arena alongside Google Home, Microsoft’s Cortana, and Apple’s Siri. These platforms came to dominate the consumer landscape with voice-first interfaces that control smart homes, stream music, manage shopping lists, set reminders, and create routines. They offer a wide range of skills and actions, covering multiple domains. Alexa Skills and Google Actions opened the door for third-party developers to extend functionality, providing everything from weather updates and news to games and productivity tools, all while integrating with smart home devices and IoT controls.

Voice assistants are now part of our everyday lives, connecting various device ecosystems like smart speakers, cars, TVs, refrigerators, and wearables. This creates an ambient computing environment where they’re always listening and ready to assist, helping us automate routines and coordinate multi step tasks, even if their reasoning abilities and transactional capabilities are somewhat limited.

Voice assistant characteristics and ecosystem dominance

- Voice first interfaces that excel in natural language understanding and speaker identification, along with multi room audio capabilities.

- Smart home IoT control for lights, thermostats, locks, security cameras, and more, all automated through routines.

- A vast array of Skills and Actions from developer ecosystems, offering many thousands of third-party conversational experiences.

- Conversational commerce features that help with shopping lists, reordering items, product recommendations, and transactions.

- Ambient computing that’s always listening, context aware, and capable of coordinating across multiple devices.

Voice assistants have made conversational interfaces a norm, leading to mainstream consumer adoption and establishing commercial viability. This has resulted in multi billion dollar ecosystems, even with their limitations in conversation depth and reasoning. They lay the groundwork for more advanced agentic multimodal architectures in the future.

Transformer Era: Large Language Models and Human-Like Conversations (Late 2010s-2020s)

The Transformer architecture, introduced in the 2017 paper “Attention Is All You Need”, is built on self-attention and processes sequences in parallel, which made it practical to train much larger models with longer context windows. This was a game changer for NLP, paving the way for models like GPT-2 in 2019 and GPT-3 in 2020, which excelled at generating human-like text. They brought zero-shot and few-shot learning, along with the ability to follow instructions, while encoding vast amounts of knowledge in billions of parameters. In late 2022, ChatGPT from OpenAI made conversational LLMs mainstream, enabling natural and engaging conversations, complex reasoning, and multi-turn context, which sparked a boom in conversational AI for both enterprises and consumers.
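As a rough illustration of self-attention, the sketch below implements single-head scaled dot-product attention in plain Python. The toy input `x` and the identity projection matrices are assumptions chosen for readability; real models learn the projections and use many heads, batching, and optimized tensor libraries.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention.

    x is a (tokens x d_model) matrix; w_q, w_k, w_v project it to
    queries, keys, and values. Returns (output, attention_weights).
    """
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    weights = []
    for q_i in q:
        # Every token scores every other token; rows are independent,
        # which is what allows the computation to run in parallel.
        scores = [sum(a * b for a, b in zip(q_i, k_j)) / math.sqrt(d_k) for k_j in k]
        weights.append(softmax(scores))
    return matmul(weights, v), weights


# Toy example: three tokens with two-dimensional embeddings (made up).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
output, weights = self_attention(x, identity, identity, identity)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` sums to 1 and says how much one token draws on every other token when building its new representation.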

LLMs showcase some impressive emergent abilities, such as few shot learning, chain of thought prompting, complex reasoning, instruction following, code generation, translation, summarization, and question answering. Their massive scale allows for generalist conversational capabilities, while specialized domain tuning and fine tuning, along with retrieval augmented generation (RAG), help integrate external knowledge and reduce hallucinations.

Transformer LLM characteristics and the conversational revolution

- Self attention, parallel processing, and massive context windows lead to human like text generation.

- Few shot and zero shot learning, along with instruction following, highlight their emergent abilities at scale.

- Chain of thought prompting enables complex reasoning and step by step problem solving.

- Retrieval augmented generation (RAG) allows for external knowledge integration and helps reduce hallucinations.

- Fine tuning and domain adaptation enhance specialized conversational capabilities for various enterprise use cases.
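Retrieval augmented generation can be sketched as two steps: retrieve the most relevant passages, then place them in the prompt. The example below is a simplified stand-in that ranks passages by bag-of-words cosine similarity, where production systems typically use learned embeddings and a vector index. The `DOCUMENTS` list and prompt wording are hypothetical, and the final call to a language model is intentionally left out.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base; the facts are ones already stated in this article.
DOCUMENTS = [
    "ELIZA was created in 1966 by Joseph Weizenbaum at MIT.",
    "Siri launched in 2011 with the Apple iPhone 4S.",
    "ChatGPT was released by OpenAI in 2022.",
]


def bag_of_words(text):
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[token] * b[token] for token in a if token in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    query_vector = bag_of_words(query)
    ranked = sorted(documents, key=lambda d: cosine(query_vector, bag_of_words(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, documents, k=1):
    """Ground the question in retrieved context before it reaches a model."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Answer using only the context below.\nContext:\n{context}\nQuestion: {query}"


print(build_prompt("Who created ELIZA?", DOCUMENTS))
```

Because the model is asked to answer from retrieved text instead of from memory alone, its answers can be checked against a source, which is how RAG helps reduce hallucinations.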

LLMs are transforming chatbots into general purpose conversational engines that can handle complex tasks, reasoning, and knowledge synthesis, all while fostering natural and engaging dialogue. This lays the groundwork for agentic systems capable of autonomous execution.

Agentic AI Era: Autonomous Decision Makers and Multi-Step Execution (2023-2026)

AI agents are the most advanced form of conversational AI so far, functioning as autonomous, goal-oriented systems. They combine multi-step planning, long-term memory, tool integration, and orchestration of external APIs. Agents can collaborate with one another, reflect on what they have stored in memory, and improve over time. They break complex goals into manageable tasks, execute actions using external tools, APIs, and databases, and work through multi-step processes to reach their objectives with little or no human intervention.

Frameworks like LangChain, LlamaIndex, AutoGPT, and BabyAGI empower these agentic workflows, allowing for tool calling, memory management, planning, reflection, and autonomous operation through a conversational interface. This decouples the conversation from task execution, paving the way for sophisticated autonomous systems.
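The plan, act, and remember cycle behind these workflows can be sketched without any framework. In the example below every name is hypothetical: `search_flights` and `book_flight` are stubs standing in for external APIs, and `plan` is a hard-coded stand-in for the step where a real agent would ask an LLM to decompose the goal. It does not reproduce the API of LangChain or any other library.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


# Hypothetical tools standing in for external APIs (stubbed, no network calls).
def search_flights(destination: str) -> str:
    return f"flight options found for {destination} (stub)"


def book_flight(destination: str) -> str:
    return f"flight to {destination} booked (stub)"


TOOLS: Dict[str, Callable[[str], str]] = {
    "search_flights": search_flights,
    "book_flight": book_flight,
}


def plan(goal: str) -> List[Tuple[str, str]]:
    """Stand-in planner: a real agent would ask an LLM to decompose the goal."""
    destination = goal.rsplit(" to ", 1)[-1]
    return [("search_flights", destination), ("book_flight", destination)]


@dataclass
class Agent:
    tools: Dict[str, Callable[[str], str]]
    memory: List[str] = field(default_factory=list)

    def run(self, goal: str) -> List[str]:
        """Decompose the goal, call one tool per step, and record each observation."""
        self.memory.append(f"goal: {goal}")
        for tool_name, argument in plan(goal):
            tool = self.tools.get(tool_name)
            if tool is None:
                self.memory.append(f"skipped unknown tool: {tool_name}")
                continue
            observation = tool(argument)
            self.memory.append(f"{tool_name} -> {observation}")
        return self.memory


agent = Agent(TOOLS)
for entry in agent.run("Book a trip to Lisbon"):
    print(entry)
```

Keeping the tool registry and the memory log separate from the planner reflects the decoupling of conversation from task execution: the planner can be swapped for a model call without touching how tools run.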

Agentic AI characteristics of autonomous conversational systems

- Achieving goals autonomously through multi step planning, decomposition, execution, and orchestration.

- Integrating tools, external APIs, databases, and workflows for completing multi step tasks.

- Utilizing long term memory to track conversation history, user preferences, and learned behaviors for reflection.

- Collaborating with multiple agents, coordinating specialized teams to tackle complex problems.

- Focusing on self improvement through continuous learning, adapting based on user feedback, and optimizing performance.

Agentic systems are capable of executing intricate workflows, such as booking travel, managing schedules, conducting research, and generating reports. They handle multi step e-commerce tasks and customer service operations autonomously, representing a significant evolution in conversational AI.

Multimodal Conversational AI: Vision, Language, and Audio Integration

Multimodal foundation models such as GPT-4o and Gemini 1.5 combine text, vision, and audio, including real-time speech and speech translation. They can interpret images and video, which allows for multimodal conversations that feel more human. Agents built on these models can analyze documents, images, diagrams, charts, and audio conversations, and produce outputs that include text, speech, and visual presentations.

In the real world, these capabilities shine in areas like healthcare diagnostics, medical imaging analysis, radiology reports, legal document reviews, contract analysis, and even financial charts and dashboards. They also play a crucial role in customer support, offering visual troubleshooting and audio diagnostics.

Multimodal AI capabilities for natural conversations

- Vision and language understanding, image analysis, document comprehension, and diagram interpretation

- Audio processing, speech translation, emotion recognition, and speaker identification

- Real time multimodal interactions, video analysis, live camera feeds, and screen sharing

- Generating multimodal outputs like text, speech, visual presentations, and comprehensive responses

- Enterprise applications in healthcare diagnostics, legal reviews, and financial analysis

By integrating multimodal elements, we can create conversations that feel more human-like, moving past the limitations of text-only interfaces toward conversational AI that behaves like a capable assistant.

Enterprise Conversational AI and Industry Transformation

Enterprise conversational AI is reshaping various areas like customer service, sales, marketing, internal productivity, knowledge management, compliance, and automation. It’s all about creating autonomous agent workflows, enhancing multi agent collaboration, and leveraging industry specific domain knowledge to ensure regulatory compliance. We’re also seeing advancements in conversational commerce, with autonomous purchasing and recommendation engines that streamline supply chain coordination, boost customer success, and enable proactive outreach to predict and intervene in churn.

In the HR realm, conversational AI is revolutionizing recruiting, interview scheduling, onboarding, employee support, compliance training, personalized career development, and internal knowledge management through conversational search and document analysis.

Enterprise conversational AI transformation areas

- Customer service: enabling autonomous resolution, managing multi step workflows, and predicting escalations.

- Sales and marketing: enhancing conversational commerce and facilitating autonomous purchasing with recommendation engines.

- Internal productivity: improving knowledge management and document analysis through conversational search.

- Compliance: automating regulatory reporting, creating audit trails, and developing conversational compliance assistants.

- HR: streamlining recruiting, onboarding, employee support, and career development.

Implementing these enterprise solutions can lead to a 40% reduction in costs, 35% faster resolution times, and a 28% increase in customer satisfaction, while supporting autonomous operations, continuous learning, and domain adaptation.

How Codearies Helps Customers Build Next Generation Conversational AI Agents

Codearies offers top notch conversational AI platforms that are revolutionizing chatbots, autonomous AI agents, and multimodal systems. We focus on tool integration, memory management, and collaboration among multiple agents to provide industry specific solutions for customer service, sales, HR, and compliance automation.

Agentic AI platforms for autonomous execution

Our autonomous AI agents are designed for multi step planning, integrating tools, external APIs, databases, and workflows. They utilize long term memory, conversation history, and user preferences to reflect, improve, and learn continuously. With multi agent collaboration, our specialized agents work together to tackle complex problems.

Multimodal conversational AI for natural interactions

We integrate vision, language, and audio using models such as GPT-4 and Gemini. This allows for real-time speech, speech translation, image analysis, document comprehension, and video understanding, with multimodal output that includes text, speech, and visual presentations. Our goal is to facilitate natural, human-like conversations in areas like healthcare diagnostics, legal reviews, and financial analysis.

Enterprise conversational AI tailored for industries

Our solutions for customer service enable autonomous resolutions through multi-step workflows. We also enhance sales with conversational commerce, streamline HR processes with conversational recruiting and personalized employee support for career development, and improve internal knowledge management through conversational search and document analysis. Our compliance automation covers regulatory reporting.

Scalable conversational AI infrastructure

We provide a cloud-native, scalable infrastructure that supports real-time processing of millions of concurrent conversations with low latency. Our global edge deployment supports multiple languages, localization, and cultural adaptation, all while maintaining enterprise security, data sovereignty, and compliance with HIPAA, GDPR, and SOC 2. Continuous learning and adaptation are at the core of our approach.

Rapid deployment for conversational AI acceleration

We can deliver a minimum viable product (MVP) for conversational AI in just six weeks, with enterprise-grade solutions ready in three months. Continuous optimization, A/B testing, performance monitoring, and user satisfaction analytics drive ongoing improvement, helping you maintain a competitive edge in conversational AI.

Frequently Asked Questions

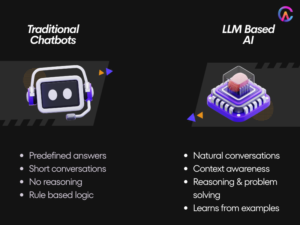

Q1: What’s the fundamental difference between chatbots and AI agents in terms of their autonomous capabilities?

Chatbots are designed to respond reactively, relying on scripted answers and pattern matching through statistical NLP. They have limited context memory and simply react to user inputs by following predefined flows. On the other hand, AI agents are proactive and can plan multi step processes, integrate tools, execute tasks externally, and possess long term memory. They reflect and improve themselves to achieve complex goals independently, marking a significant evolution in conversational AI. Codearies is at the forefront, building autonomous AI agents that manage multi step workflows, tool integration, and memory management, enabling enterprise grade conversational AI for customer service, sales, and HR automation.

Q2: Why does multimodal conversational AI represent the next step in natural interaction?

Multimodal AI combines text, vision, audio, real time speech, speech translation, image analysis, and video comprehension, allowing for conversations that feel more natural and human like, going beyond the limitations of text alone. Codearies is leading the way with multimodal conversational AI that integrates vision, language, and audio, offering real time interactions in areas like healthcare diagnostics, legal reviews, financial analysis, and more, all while maintaining that human touch in enterprise applications.

Q3: What impact does agentic AI have on autonomous execution in enterprises?

Agentic AI breaks down complex goals and autonomously executes multi step workflows, integrating tools and external APIs and databases to achieve objectives without human intervention. This approach can lead to a 40% reduction in costs and a 35% faster resolution time. Codearies implements agentic AI platforms that focus on autonomous planning, memory reflection, and multi agent collaboration, providing industry specific solutions for customer service, sales, internal productivity, and compliance automation.

Q4: What are the security and compliance requirements for conversational AI in enterprises?

Enterprise conversational AI requires compliance with regulations and standards such as HIPAA, GDPR, and SOC 2, together with data sovereignty, conversation encryption, PII protection, audit trails, continuous monitoring, and regulatory reporting. Codearies delivers enterprise-grade conversational AI security and compliance on cloud-native infrastructure, with data sovereignty, multi-language localization, and continuous learning and adaptation that preserve regulatory compliance across enterprise deployments.

Q5: What does the future hold for the evolution of conversational AI with agentic multimodal architectures?

The future combines agentic autonomy with multimodal naturalness, continuous learning, and self-improvement, driving industry transformation across customer service, sales, and HR automation. Codearies builds future-proof conversational AI platforms that are autonomous, multimodal, scalable, and enterprise-ready, helping customers preserve their competitive advantage as the technology evolves.

For business inquiries or further information, please contact us at